Neuromorphic computing

Neuro-inspired AI to optimize the learning and computing efficiency of next-generation AI

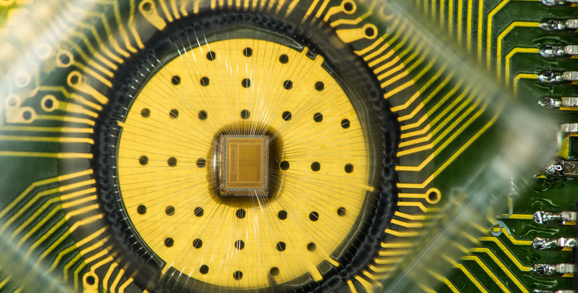

In-memory computing

Memory devices, compute cores and applications such as deep learning and neuro-symbolic AI

High-Speed I/O Links

Designing next-generation high-speed I/O links that target low power consumption, low latency and small silicon area

Tape research

Storage solutions for Big Data

Neuro-Vector-Symbolic Architecture

Processing with vectors for fast, incremental learning and transparent computing

Snap machine learning

A library that provides high-speed training of popular machine learning models on modern CPU/GPU computing systems

Cloud storage

Cloud storage technology & software-defined storage

Future non-volatile memory systems

Enhancing storage performance and reliability

Join our team

We are currently looking for highly motivated and enthusiastic software engineers and researchers.

Contact

Robert Haas

Department head